Assembly, Shipping and Support for all orders from within the European Union

Our NVIDIA artificial-intelligence solutions can be configured with NVIDIA's latest range of Tensor Core and RTX GPU cards.

Broadberry's Tensor Core range of AI/HPC servers, powered by the NVIDIA H100 Tensor Core GPU, provides exceptional speed for the most advanced elastic data centers, supporting AI, data analytics, and high-performance computing (HPC) tasks. Its Tensor Core technology accommodates various math precisions, making it a versatile accelerator for all computing workloads. The H100 PCIe supports double precision (FP64), single precision (FP32), half precision (FP16), and integer (INT8) computations.

The NVIDIA H100 card is a dual-slot, 10.5-inch PCI Express Gen5 card built on the NVIDIA Hopper™ architecture. It uses a passive heat sink for cooling, relying on system airflow for proper operation within thermal limits. The H100 PCIe can function at its maximum thermal design power (TDP) of 350 W, delivering accelerated performance for applications requiring high computational speed and data throughput. With a world-leading PCIe card memory bandwidth exceeding 2,000 gigabytes per second (GBps), the NVIDIA H100 PCIe significantly reduces the time needed to solve complex problems with large models and extensive datasets.

The NVIDIA H100 PCIe card includes Multi-Instance GPU (MIG) capability, allowing the GPU to be divided into up to seven hardware-isolated GPU instances. This feature creates a flexible platform for elastic data centers, enabling them to adapt to changing workload requirements. It also allows for allocating the appropriate resources for various multi-GPU tasks, ranging from small to large jobs. The versatility of the NVIDIA H100 ensures that IT managers can optimise the use of each GPU in their data center.
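As a rough illustration of how partitioning into up to seven instances affects capacity planning, the sketch below checks whether a set of requested instance sizes fits on one GPU and estimates how many GPUs a larger batch would need. It is a simplified model under stated assumptions: the names `MAX_SLICES`, `fits_on_one_gpu` and `gpus_needed` are hypothetical, each instance is described only by a number of slices, and the real MIG profile and placement rules (which allow only certain combinations) are ignored.

```python
# Simplified capacity model, NOT the NVIDIA MIG API.
# Assumption: a GPU offers 7 equal slices, matching the "up to seven
# hardware-isolated instances" figure; real MIG only permits specific
# profile sizes and placements, which this sketch does not model.
MAX_SLICES = 7


def fits_on_one_gpu(requested_slices):
    """Return True if the requested instance sizes fit on a single GPU."""
    if any(size < 1 for size in requested_slices):
        raise ValueError("each instance needs at least one slice")
    return sum(requested_slices) <= MAX_SLICES


def gpus_needed(requested_slices):
    """Estimate the GPU count with a first-fit-decreasing packing heuristic."""
    free = []  # remaining slices on each GPU opened so far
    for size in sorted(requested_slices, reverse=True):
        if size < 1 or size > MAX_SLICES:
            raise ValueError("instance size must be between 1 and MAX_SLICES")
        for index, remaining in enumerate(free):
            if size <= remaining:
                free[index] -= size  # place on the first GPU with room
                break
        else:
            free.append(MAX_SLICES - size)  # open a new GPU
    return len(free)


if __name__ == "__main__":
    print(fits_on_one_gpu([1] * 7))          # seven small instances
    print(fits_on_one_gpu([4, 3, 1]))        # eight slices requested
    print(gpus_needed([4, 3, 3, 2, 1, 1]))   # mixed small and large jobs
```

First-fit-decreasing is a heuristic rather than an optimal packer, so treat the result as an estimate to refine against the instance profiles your GPU actually supports.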

Unlock outstanding performance with the NVIDIA Ada Lovelace architecture.

The NVIDIA RTX™ 6000 Ada Generation stands as the top-tier graphics card for workstations, crafted for professionals seeking peak performance and reliability. It ensures optimal conditions for high-level design, real-time rendering, AI, and high-performance computing tasks across various industries.

Based on the NVIDIA Ada Lovelace architecture, the RTX 6000 integrates 142 third-generation RT Cores, 568 fourth-generation Tensor Cores, and 18,176 CUDA® cores with 48GB of error correction code (ECC) graphics memory. This powerful combination propels the next generation of AI graphics and petaflop inferencing performance, significantly accelerating rendering, AI, graphics, and computing tasks.

NVIDIA RTX professional graphics cards undergo certification with various professional applications, verified by top independent software vendors (ISVs) and workstation manufacturers. Supported by a global team of specialists, these cards provide peace of mind, allowing you to concentrate on essential tasks with the leading visual computing solution for critical business needs.

2U Quad Node, NVIDIA® Grace CPU Superchip, 480GB LPDDR5X with ECC (Per Node), 1+1 3000W/3600W redundant power supply, 24x 2.5" NVMe hot-swappable drive bays.

Optimised for High Performance Computing, AI, Deep Learning and Industrial Automation. AMD EPYC 9004/9005 Series Processor. Supports 8x AMD Instinct MI300X Accelerator GPUs. 5250W Redundant power supply. 8x 2.5" hot-swap NVMe drive bays

Configure SuperWorkstation with AMD Ryzen Threadripper PRO, 6 PCI-E 4.0 x16 slots, 1x 10GBase-T LAN port, 1x 1GbE LAN port (shared with IPMI), redundant power supply.

Ideal for AI, Machine Learning, Cloud Computing, Edge and Network Function Virtualisation. Up to 6x PCI-E 5.0 x16 FHFL slots, 2x PCI-E 5.0 x16 FHHL slots. Redundant 2000W Power Supply, 6x 2.5" hot-swap NVMe/SATA/SAS drive bays

Ideal for HPC, AI, Deep Learning, cloud gaming, VDI, rendering and animation. 13x PCI-E 5.0 x16 slots. Redundant 2700W Titanium Power Supply, 8x 3.5"/2.5" hot-swap NVMe/SATA drive bays

Dual 4th Gen Intel Xeon Scalable Processors. Ideal for Data Intensive HPC, In-Memory Computing, Private & Hybrid Cloud and Software-defined Storage. 2x PCI-E 5.0 x16 slots. Redundant 1600W Power Supply, 16x hot-swap E3.S (7.5mm) NVMe drive bays

Optimised for High Performance Computing, AI, Deep Learning and Industrial Automation. AMD EPYC 9004 Series Processor. 3000W Redundant power supply. 24x 2.5" hot-swap NVMe/SATA drive bays

Ideal for HPC, AI, Deep Learning, cloud gaming, VDI, rendering and animation. 13x PCI-E 5.0 x16 slots. Redundant 2700W Titanium Power Supply, 24x 2.5" hot-swap NVMe/SATA drive bays

Ideal for High Performance Computing, AI and Deep Learning. 10x PCI-E 5.0 x16 slots. Redundant 3000W Titanium Power Supply, 10x 2.5" hot-swap NVMe/SATA drive bays

Ideal for High Performance Computing, AI and Deep Learning. 8x PCI-E 5.0 x16 slots. Redundant 3000W Titanium Power Supply, 6x 2.5" hot-swap NVMe/SATA drive bays

Ideal for Hyperconverged Infrastructure, Container Storage and Scale-Out File Server. Quad Node 2U Form Factor. Redundant 3000W Power Supply, 3x 3.5"/2.5" hot-swap NVMe/SATA drive bays (Per Node)

Ideal for Hyperconverged Infrastructure, Diskless HPC Clusters and High-Performance File Systems. Quad Node 2U Form Factor. Redundant 3000W Power Supply, 6x 2.5" hot-swap NVMe/SATA drive bays (Per Node)

Optimised for High Performance Computing, AI, Deep Learning and Industrial Automation. AMD EPYC 9004 Series Processor. 3000W Redundant power supply. 18x 2.5" hot-swap NVMe/SATA drive bays

Dual 4th Gen Intel Xeon Scalable 6444Y processor, Ideal for High Performance Computing (HPC), AI, Deep Learning, and scientific research. 4x NVIDIA A100 GPUs. Redundant 2200W Titanium Power Supply, 8x 2.5" hot-swap NVMe/SATA drive bays.

Optimised for High Performance Computing, AI, Deep Learning and Industrial Automation. AMD EPYC 9004 Series Processor. 3000W Redundant power supply. 24x 2.5" hot-swap NVMe/SATA drive bays

Ideal for HPC, AI, Deep Learning, Conversational AI & Industrial Automation. 10x PCI-E 5.0 x16 slots. Redundant 5250W Titanium Power Supply, 8x 2.5" hot-swap NVMe drive bays

Ideal for HPC, AI, industrial automation, analytics server. 8x PCI-E 5.0 x16 (LP) slots, 2x PCI-E 5.0 x8 FHFL slots. Redundant 3000W Titanium Power Supply, 20x 2.5" hot-swap NVMe/SATA drive bays

7U, Dual 4th Gen Intel Xeon Scalable Processors, Supports up to 8x NVIDIA HGX H100/H200 GPU cards, 3+3 3000W redundant power supply, 8x 2.5" NVMe & 2x 2.5" NVMe/SATA/SAS hot-swappable drive bays.

NVIDIA DGX H100 with 8x NVIDIA H100 Tensor Core GPUs, Dual Intel® Xeon® Platinum 8480C Processors, 2TB Memory, 2x 1.92TB NVMe M.2 & 8x 3.84TB NVMe U.2.

7U, Dual 5th Gen Xeon® Platinum 8558 Processors, 8x NVIDIA HGX H200 GPU cards, 4+2 3000W redundant power supply, 8x 2.5" NVMe & 2x 2.5" NVMe/SATA/SAS hot-swappable drive bays.

NVIDIA DGX H200 with 8x NVIDIA H200 141GB SXM5 GPU Server, Dual Intel® Xeon® Platinum Processors, 2TB DDR5 Memory, 2x 1.92TB NVMe M.2 & 8x 3.84TB NVMe SSDs.

NVIDIA DGX B200 with 8x NVIDIA Blackwell GPUs, Dual Intel® Xeon® Platinum 8570 Processors, 4TB DDR5 Memory, 2x 1.92TB NVMe M.2 & 8x 3.84TB NVMe SSDs.

NVIDIA DGX GB200 with 72x NVIDIA Blackwell GPUs, Dual Intel® Xeon® Platinum Processors, 4TB DDR5 Memory, 2x 1.92TB NVMe M.2 & 8x 3.84TB NVMe SSDs.

Over recent years there have been massive advances in the field of artificial intelligence. The quicker we can process complex calculations, the quicker scientists can cure life-threatening diseases that affect millions. We've been lucky enough to be at the forefront of AI, designing and manufacturing server solutions for the key players in this field, where we've exploited the latest technology to overcome existing computational bottlenecks.

For a long time storage technology hadn't changed much. Recently we've seen a massive trend towards solid-state storage, which can increase IO by 1000% over traditional storage technology. Customers of all sizes are benefiting from this improvement in performance.

It's such an exciting time in server technology. For one of the leading players in artificial intelligence, we were able to design a server that used the latest GPU technology to overcome their processing bottleneck. With our new server solutions, they saw speed increases of between 10x and 100x in their artificial intelligence application, bringing processing times down from days to hours.

For our customers demanding high-performance servers for virtualisation, we've designed multi-node servers powerful enough to replace what would previously have required up to 8 individual servers, thus saving power, increasing density and reducing TCO.

More and more companies are moving from traditional tier 1 server vendors like Dell and HPE to commodity hardware like Broadberry due to the massive savings on offer for essentially the same kit containing the same leading brand components.

Average savings of around 25% can be achieved on the initial purchase, and up to 50% over the following 3 years. The forward-thinking IT companies (Google, Facebook, Amazon, Microsoft) all use commodity hardware in their datacentres; you won't find a Dell or HP in there. Nobody wants to pay a 100% price increase for a hard drive in a special drive caddy. Broadberry supplies all the drive caddies with the initial purchase, so you are not treated this way.

Customers have wised up to vendor lock-ins and are looking for open solutions, so that they are not tied in to long-term commitments.

Striving to escape vendor lock-ins has been a big part of this shift, as has the fact that TCO is reduced by no longer requiring yearly licences for things like remote management, which we include for free. Expanding your solution is also easy with Broadberry as we provide all the caddies with our servers, meaning you won't face prices inflated by up to 300% for the exact same drives in vendor-specific caddies.

The NVIDIA AI foundry service provides enterprises with a comprehensive solution for building custom generative AI models. This includes NVIDIA AI foundation models, the NVIDIA NeMo framework and tools, and the NVIDIA DGX Cloud.

Prominent foundation models, such as Llama 2, Stable Diffusion, and NVIDIA’s GPT-8B, have been strategically optimised to deliver the utmost performance while maintaining cost efficiency. These models serve as the cornerstone for various AI applications, providing a robust framework for generating high-quality outputs at a competitive cost. Their optimisation ensures that enterprises can leverage cutting-edge AI capabilities without compromising on economic considerations, making them an ideal choice for a wide range of projects.

Fine-tune and assess the models with NVIDIA NeMo, a specialised framework that allows for meticulous adjustments and evaluations using proprietary data. This robust tool provides a comprehensive environment for refining and validating models, ensuring that they align precisely with the specific requirements and nuances of your unique dataset. With NVIDIA NeMo, you can optimise models with precision, facilitating the development of highly accurate generative AI solutions tailored to your organisation's needs.

Simplify the process of AI development by utilising a user-friendly platform specifically optimised for multi-node training. This streamlined solution enhances efficiency and convenience, providing developers with an environment that is easy to navigate while ensuring optimal performance across multiple nodes. The platform is thoughtfully designed to cater to the complexities of multi-node training, offering a seamless and intuitive experience for AI development. This results in accelerated progress and increased productivity for developers working on projects that require the collaborative power of multiple nodes.

Execute it seamlessly in various environments, be it the cloud, the data center, or at the edge. This versatile capability allows you to deploy and operate the system with flexibility, adapting to the specific needs and constraints of different computing environments. Whether you require the scale of the cloud, the centralised control of the data center, or the decentralised processing at the edge, this adaptability ensures that the solution can be employed wherever it is most effective for your particular use case.

NVIDIA AI stands out as the leading platform for generative AI globally, tailored to suit your application and business requirements. Packed with innovations across the entire system, from accelerated computing to crucial AI software, pre-trained models, and AI foundries, it provides the capability to create, personalise, and launch generative AI models for various applications, regardless of the location.

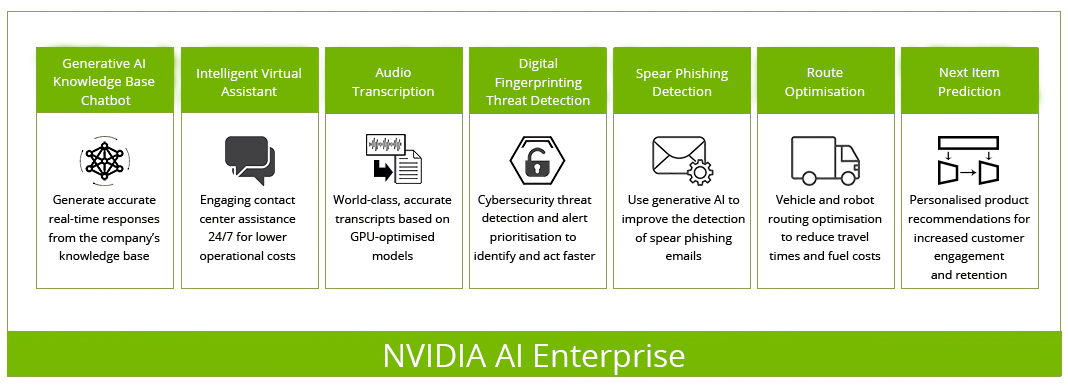

NVIDIA AI Enterprise accelerates the data science pipeline and streamlines the development and deployment of production AI, including generative AI, computer vision, speech AI and more. With over 50 frameworks, pre-trained models, and development tools, NVIDIA AI Enterprise is designed to accelerate enterprises to the leading edge of AI while simplifying AI to make it accessible to every enterprise.

Broadberry's Tensor Core range of HPC servers, powered by the NVIDIA® H100 Tensor Core GPU, provides unparalleled acceleration across all scales, fueling the most advanced elastic data centers globally for AI.

Every industry wants intelligence. Within their ever-growing lakes of data lie insights that can provide the opportunity to revolutionise entire industries: personalised cancer therapy, predicting the next big hurricane, and virtual personal assistants conversing naturally. These opportunities can become a reality when data scientists are given the tools they need to realise their life's work.

The NVIDIA® H100 Tensor Core GPU provides up to 20X higher performance over the earlier NVIDIA Volta™ generation. Built on the NVIDIA Hopper™ architecture, the H100 80GB delivers memory bandwidth of over 2 terabytes per second (TB/s) to run the largest models and datasets.

Unparalleled AI and graphics performance for the data center, driven by the new NVIDIA Ada Lovelace architecture.

Powered by the NVIDIA Ada Lovelace architecture, the NVIDIA L40S Tensor Core GPU provides groundbreaking multi-precision performance. This innovation accelerates a diverse array of tasks, including deep learning and machine learning training and inference, video transcoding, AI audio (AU) and video effects, rendering, data analytics, virtual workstations, virtual desktops, and numerous other workloads.

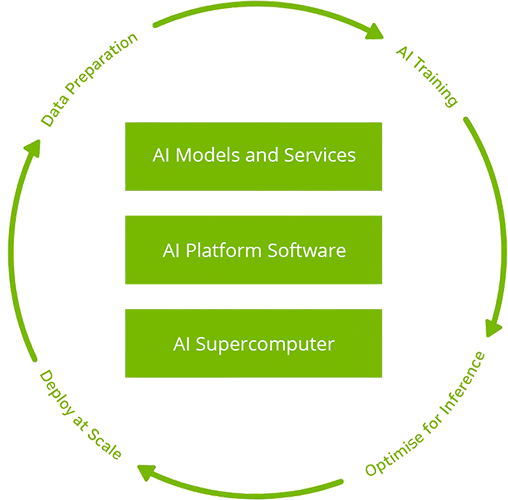

The NVIDIA EGX™ platform includes optimised software that delivers accelerated computing across the infrastructure. Through the utilisation of NVIDIA AI Enterprise, businesses gain entry to a comprehensive, cloud-native suite of AI and data analytics software. This suite is not only optimised for performance but also certified and fully supported by NVIDIA to seamlessly operate on VMware vSphere with NVIDIA-Certified Systems.

NVIDIA AI Enterprise incorporates essential enabling technologies from NVIDIA, facilitating the swift deployment, efficient management, and scalable operation of AI workloads within the contemporary hybrid cloud environment. This integrated solution serves as a robust foundation for businesses seeking to harness the full potential of artificial intelligence within their operational frameworks.

Our Rigorous Testing Procedure

Before leaving our workshops, all Broadberry server and storage solutions undergo a rigorous 48-hour testing procedure. This, combined with a choice of high-quality components, ensures that all our server and storage solutions meet the strictest quality standards imposed on us.

Unequalled Flexibility

Our main objective is to offer server and storage solutions of the highest quality. We understand that every company has different requirements, and we are able to offer unequalled flexibility in customising and designing server and storage solutions.

We have established ourselves as a key storage provider in Europe, and since 1989 we have supplied our server and storage solutions to the world's biggest brands. Some examples of our customers: